|

5 | 5 | [[Model card]](https://github.com/openai/whisper/blob/main/model-card.md) |

6 | 6 | [[Colab example]](https://colab.research.google.com/github/openai/whisper/blob/master/notebooks/LibriSpeech.ipynb) |

7 | 7 |

|

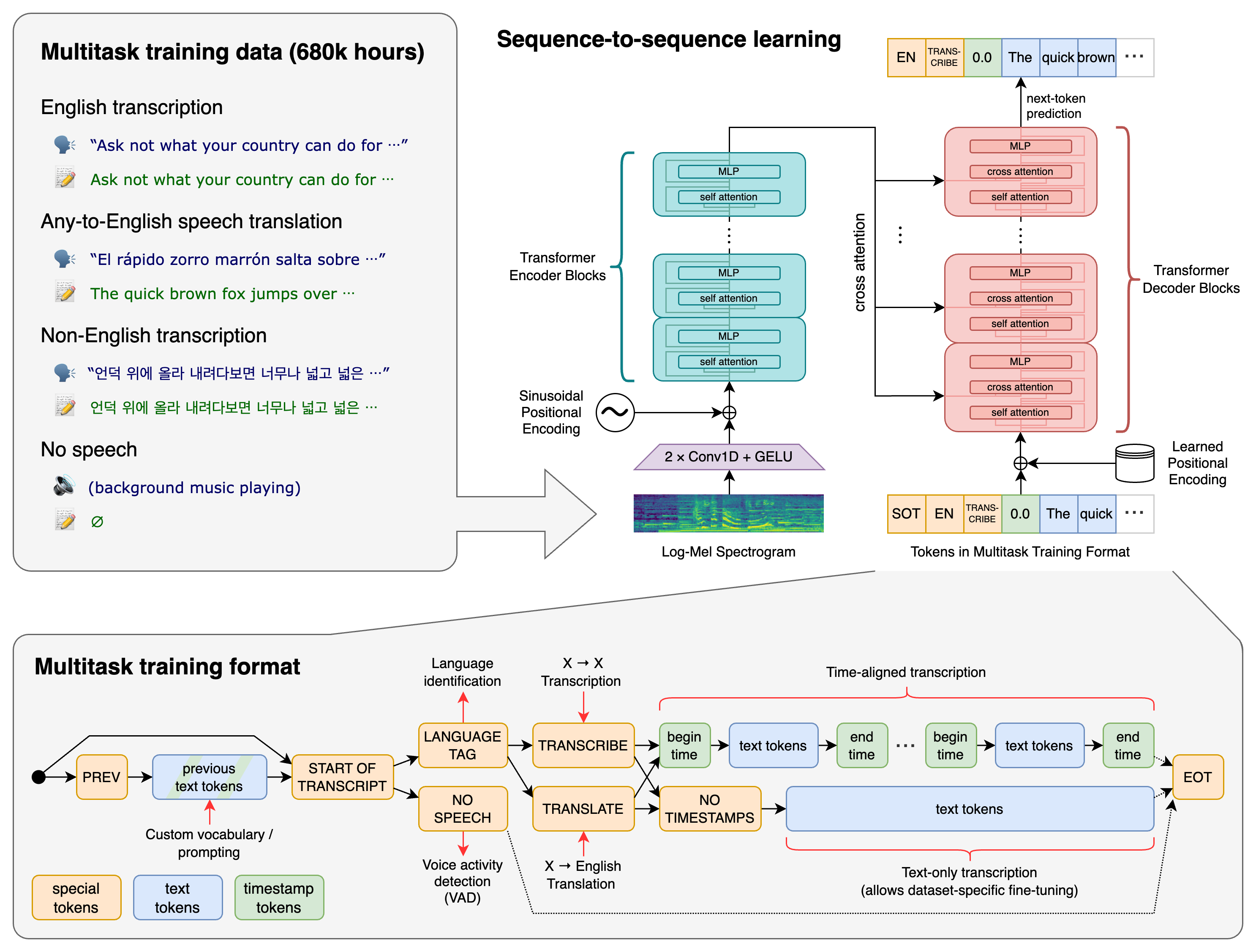

8 | | -Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multi-task model that can perform multilingual speech recognition as well as speech translation and language identification. |

| 8 | +Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multitasking model that can perform multilingual speech recognition, speech translation, and language identification. |

9 | 9 |

|

10 | 10 |

|

11 | 11 | ## Approach |

12 | 12 |

|

13 | 13 |  |

14 | 14 |

|

15 | | -A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. All of these tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing for a single model to replace many different stages of a traditional speech processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets. |

| 15 | +A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. These tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing a single model to replace many stages of a traditional speech-processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets. |
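
As a rough illustration of the "special tokens as task specifiers" idea in the changed paragraph above, the sketch below assembles the token prefix that tells the decoder which task to perform. The helper name `build_task_prefix` is hypothetical, the token spellings are assumed to follow the Whisper paper, and the tokens are kept as plain strings rather than real vocabulary IDs.

```python
from typing import List


def build_task_prefix(language: str, task: str = "transcribe",
                      timestamps: bool = True) -> List[str]:
    """Return an illustrative decoder prefix for one task (hypothetical helper).

    The token spellings are assumptions based on the Whisper paper; a real
    tokenizer maps them to integer IDs, which this sketch does not do.
    """
    if task not in ("transcribe", "translate"):
        raise ValueError(f"unsupported task: {task!r}")

    prefix = [
        "<|startoftranscript|>",  # marks the start of the prediction
        f"<|{language}|>",        # language tag, e.g. <|en|>
        f"<|{task}|>",            # task specifier
    ]
    if not timestamps:
        # Ask the decoder to emit text only, without timestamp tokens.
        prefix.append("<|notimestamps|>")
    return prefix


if __name__ == "__main__":
    print(build_task_prefix("en", "transcribe", timestamps=False))
    print(build_task_prefix("fr", "translate"))
```

Because the task is selected purely by the prefix, switching the same model from transcription to translation only changes one token in this sketch.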

16 | 16 |

|

17 | 17 |

|

18 | 18 | ## Setup |

@@ -68,9 +68,9 @@ There are five model sizes, four with English-only versions, offering speed and |

68 | 68 | | medium | 769 M | `medium.en` | `medium` | ~5 GB | ~2x | |

69 | 69 | | large | 1550 M | N/A | `large` | ~10 GB | 1x | |

70 | 70 |

|

71 | | -For English-only applications, the `.en` models tend to perform better, especially for the `tiny.en` and `base.en` models. We observed that the difference becomes less significant for the `small.en` and `medium.en` models. |

| 71 | +The `.en` models for English-only applications tend to perform better, especially for the `tiny.en` and `base.en` models. We observed that the difference becomes less significant for the `small.en` and `medium.en` models. |

72 | 72 |

|

73 | | -Whisper's performance varies widely depending on the language. The figure below shows a WER (Word Error Rate) breakdown by languages of Fleurs dataset, using the `large-v2` model. More WER and BLEU scores corresponding to the other models and datasets can be found in Appendix D in [the paper](https://arxiv.org/abs/2212.04356). The smaller is better. |

| 73 | +Whisper's performance varies widely depending on the language. The figure below shows a WER (Word Error Rate) breakdown by languages of the Fleurs dataset using the `large-v2` model. More WER and BLEU scores corresponding to the other models and datasets can be found in Appendix D in [the paper](https://arxiv.org/abs/2212.04356). The smaller, the better. |
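
For readers unfamiliar with the metric named in the changed line above, here is a minimal sketch of Word Error Rate: the word-level edit distance between a reference transcript and a hypothesis, divided by the number of reference words. The function name `word_error_rate` is hypothetical, and the sketch splits on whitespace with no text normalization, which real evaluations typically apply first, so its numbers are not comparable to published scores.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # deleting all i reference words matches an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            ))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # One substitution ("sat" -> "sit") and one deletion ("the"): 2 errors / 6 words.
    print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

Lower values are better, and the ratio can exceed 1.0 when the hypothesis contains many inserted words.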

74 | 74 |

|

75 | 75 |  |

76 | 76 |

|

@@ -144,4 +144,4 @@ Please use the [🙌 Show and tell](https://github.com/openai/whisper/discussion |

144 | 144 |

|

145 | 145 | ## License |

146 | 146 |

|

147 | | -The code and the model weights of Whisper are released under the MIT License. See [LICENSE](https://github.com/openai/whisper/blob/main/LICENSE) for further details. |

| 147 | +Whisper's code and model weights are released under the MIT License. See [LICENSE](https://github.com/openai/whisper/blob/main/LICENSE) for further details. |
