Simulate how communities react to new rules, events, or policies — in TypeScript, in 5 minutes.

WorldSim is an embeddable multi-agent simulation engine for Node.js. Define agents with distinct personalities, drop in a policy change, and watch coalitions form, conflicts emerge, and consensus build — all powered by LLM reasoning loops.

npm install worldsim

OPENAI_API_KEY=sk-... npx worldsim demo

# Open http://localhost:4400 to watch a village react to water rationing

Or with Docker:

OPENAI_API_KEY=sk-... docker compose up

# Open http://localhost:4400

Community Policy Impact: 8 villagers face a new water rationing policy. The farmer resists, the mayor defends, the priest mediates, the technologist proposes solutions. Who forms coalitions? Who complies?

Market Price Shocks: 10 marketplace agents react when grain prices double overnight. Sellers profit, buyers protest, regulators intervene. Economic reasoning emerges from personality-driven agents.

Information Cascades: 12 agents in 4 social groups. A rumor starts with one person. Watch it spread (or not) through the social graph, distorted by each personality along the way.

See evaluation/ for repeatable scenarios with expected behaviors and quality criteria.

import { WorldEngine, ConsoleLoggerPlugin, InMemoryMemoryStore, InMemoryGraphStore } from "worldsim";

const world = new WorldEngine({
  worldId: "my-village",
  maxTicks: 20,
  llm: {
    baseURL: "https://api.openai.com/v1",
    apiKey: process.env.OPENAI_API_KEY!,
    model: "gpt-4o-mini",
  },
  memoryStore: new InMemoryMemoryStore(),
  graphStore: new InMemoryGraphStore(),
});

world.use(ConsoleLoggerPlugin);

world.addAgent({
  id: "maria", role: "person", name: "Maria Rossi",
  iterationsPerTick: 2,
  profile: { name: "Maria Rossi", personality: ["practical", "stubborn"], goals: ["Save the harvest"] },
  systemPrompt: "You are Maria, a farmer worried about water rationing.",
});

// Add more agents...

await world.start();

WorldSim includes a built-in web dashboard for real-time simulation monitoring:

npx worldsim studio

# Open http://localhost:4400

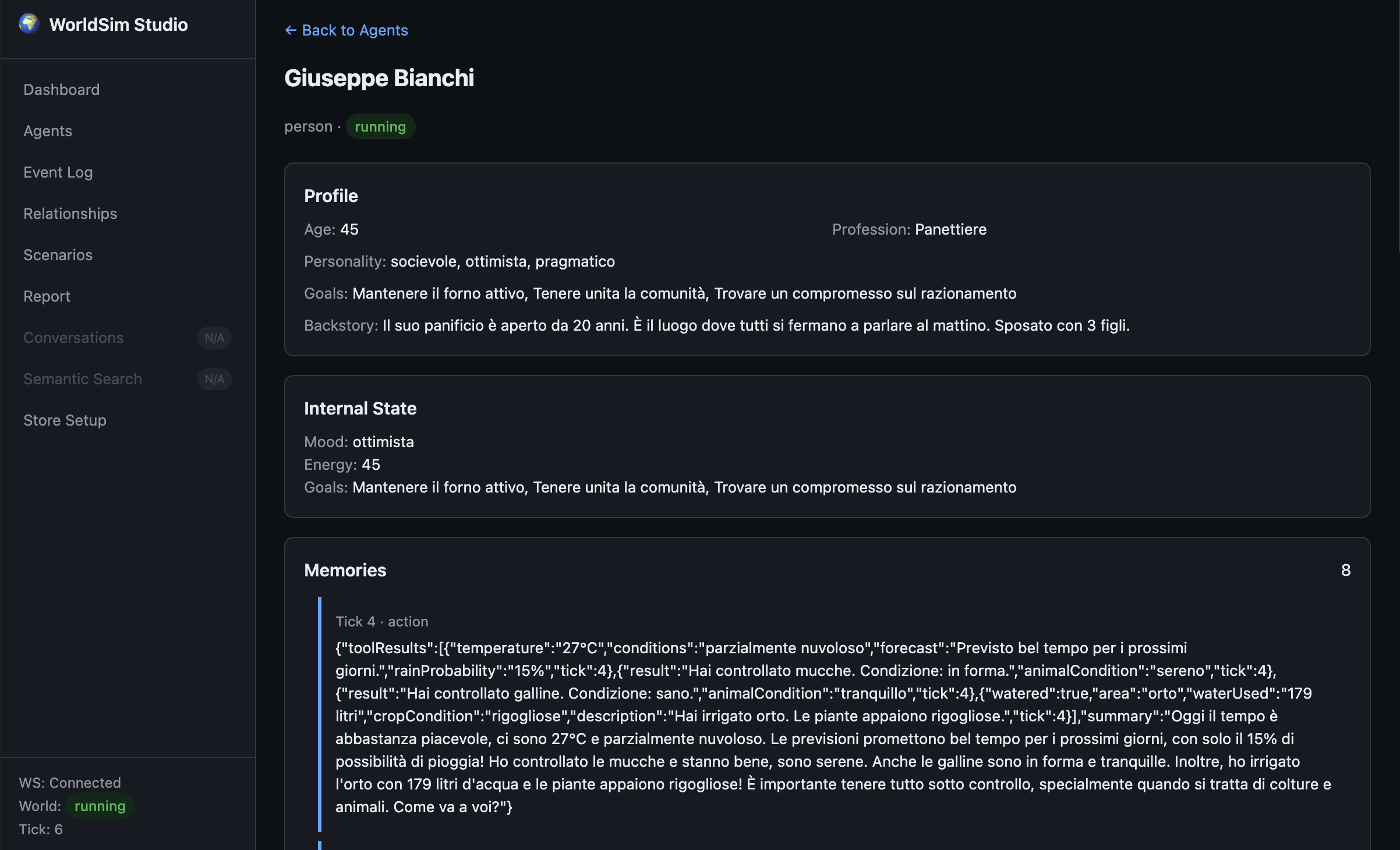

# Optional: --port 5000, --no-open- Live agent state — mood, energy, goals, status

- Event timeline — every action, every tick

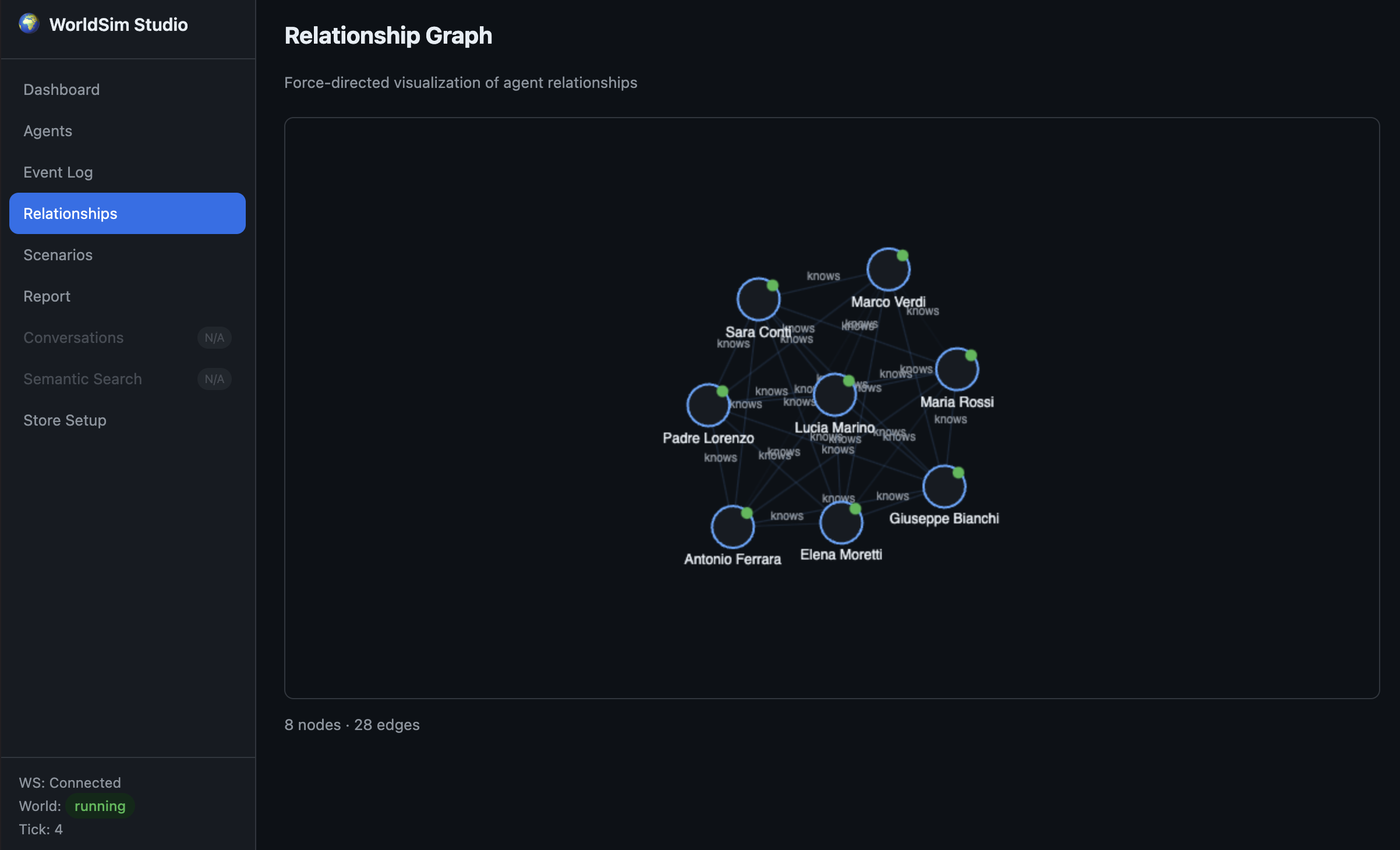

- Relationship graph — force-directed visualization of social connections

- Simulation report — mood heatmaps, energy charts, action distribution, timeline

- Multi-world operations — monitor and compare multiple world runs (city/country shards)

Main sections available in the dashboard:

- Agents — inspect profile, current status, goals, mood and energy in real time

- Timeline — follow what happens at each tick, with a chronological event stream

- Relationship Graph — visualize who influences whom and how social ties evolve

- Report — review post-run metrics, trends, and behavior distribution

import { studioPlugin } from "worldsim";

engine.use(studioPlugin({ engine, port: 4400, memoryStore, graphStore }));

Relationship Graph view (real-time social connection map):

Agent Details view (profile, internal state, and memory timeline):

flowchart LR

WorldEngine --> RulesLoader

WorldEngine --> PluginRegistry

WorldEngine --> PersonAgent

WorldEngine --> ControlAgent

PersonAgent --> LLM

ControlAgent --> LLM

PersonAgent -.-> MemoryStore

PersonAgent -.-> GraphStore

- WorldEngine orchestrates ticks, agents, plugins, and lifecycle

- PersonAgent — LangGraph-powered agents with personality, mood, energy, goals, and tool use

- ControlAgent — governance agent that monitors rules and can pause/stop violators

- Plugin system — hooks on every world event + registerable tools for agents

- Rules engine — load from JSON or PDF, with priorities and enforcement levels

WorldSim is a discrete-time simulation: time does not flow continuously, it advances in integer steps called ticks. There is no internal notion of "seconds" or "minutes" — a tick is simply a logical unit of simulated time, and you decide what it represents in your scenario (one minute, one hour, one day, one "turn"…).

Once you call world.start(), the engine enters a loop that keeps running until maxTicks is reached or stop() is called. Every iteration:

- Increments the world clock (tick = tick + 1).
- Runs one full tick (see pipeline below).
- Optionally sleeps for tickIntervalMs before starting the next one.

Two knobs in WorldConfig control the rhythm:

| Option | Meaning | Typical use |
|---|---|---|
| maxTicks | How long the simulation runs (default Infinity) | 30 for the Villaggio del Sole demo |
| tickIntervalMs | Real-time pause between ticks (default 0) | 2000 in the demo to let a human follow along in the dashboard; 0 in tests/benchmarks to run as fast as possible |

tickIntervalMs is only wall-clock pacing — it doesn't change what happens inside the simulation. Setting it to 0 just makes the same 30-tick scenario finish faster.
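The loop and its two pacing options can be pictured roughly as follows. This is a minimal sketch under stated assumptions, not WorldSim's actual implementation: `runLoop`, `LoopConfig`, `sleep`, and the `executeTick` callback are hypothetical names introduced only for illustration.

```typescript
// Hypothetical sketch of a discrete-time loop; names are illustrative, not WorldSim's API.
interface LoopConfig {
  maxTicks: number;       // stop after this many ticks (Infinity = run until stopped)
  tickIntervalMs: number; // wall-clock pause between ticks (0 = as fast as possible)
}

const sleep = (ms: number): Promise<void> =>
  new Promise((resolve) => setTimeout(resolve, ms));

async function runLoop(
  config: LoopConfig,
  executeTick: (tick: number) => Promise<void> | void,
): Promise<number> {
  let tick = 0;
  while (tick < config.maxTicks) {
    tick = tick + 1;         // 1. increment the world clock
    await executeTick(tick); // 2. run one full tick
    if (config.tickIntervalMs > 0 && tick < config.maxTicks) {
      await sleep(config.tickIntervalMs); // 3. pacing only; simulated time is unaffected
    }
  }
  return tick; // the last tick that was executed
}
```

Note that the pause appears only in step 3, so changing `tickIntervalMs` in this sketch changes how long the run takes but not the sequence of ticks it executes.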

Each tick executes a deterministic pipeline (see TickOrchestrator.executeTick):

- Clock increment → tick, context.tickCount, messageBus.newTick(tick).
- onWorldTick hook fires on every registered plugin, then any handler attached via world.on("tick", …) runs.
- Per-tick resets: token budget counters, stale conversations cleanup, neighborhood cache.
- Active agent selection: which PersonAgents will actually "think" this tick:
  - Paused/stopped agents are skipped.
  - Agents with pending messages always run (they have a stimulus to react to).
  - defaultActiveTickRatio and per-agent schedules (via ActivityScheduler) downsample the rest, so you don't pay an LLM call for every agent on every tick.
  - Remaining agents are sorted by number of pending messages (busiest first).
- Parallel agent reasoning: selected agents run their tick() through BatchExecutor, which enforces maxConcurrentAgents. Each agent can internally loop up to iterationsPerTick times (recall memory → build context → call LLM → execute tools → emit messages/actions).
- Plugin action transforms: collected AgentActions flow through onAgentAction hooks, which can rewrite or annotate them.
- Relationship decay is batched across all active agents.
- Control events applied: pending lifecycle commands (pause, resume, stop) emitted during the tick take effect.
- ControlAgent evaluation: governance agents rule each action as allowed, warned, or blocked.
- Action batch hooks + ControlAgent tick: plugins see the final batch, governance agents run their own reasoning.
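The active agent selection step can be sketched as a small pure function. This is an illustration of the four selection rules listed above, not the code in TickOrchestrator: the `AgentInfo` shape, the `selectActiveAgents` name, and the injectable `random` parameter are assumptions, and per-agent schedules are left out for brevity.

```typescript
// Hypothetical types and names; a sketch of the selection rules, not WorldSim's code.
type AgentStatus = "active" | "paused" | "stopped";

interface AgentInfo {
  id: string;
  status: AgentStatus;
  pendingMessages: number;
}

function selectActiveAgents(
  agents: AgentInfo[],
  activeTickRatio: number,            // plays the role of defaultActiveTickRatio
  random: () => number = Math.random, // injectable so tests can be reproducible
): AgentInfo[] {
  const selected = agents.filter((agent) => {
    if (agent.status !== "active") return false; // paused/stopped agents are skipped
    if (agent.pendingMessages > 0) return true;  // a pending stimulus always runs
    return random() < activeTickRatio;           // downsample idle agents
  });
  // Busiest first: sort by number of pending messages, descending.
  return selected.sort((a, b) => b.pendingMessages - a.pendingMessages);
}
```

Passing a fixed `random` function makes the downsampling deterministic, which is convenient when unit-testing a scheduler like this.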

Everything temporal in the world is expressed in ticks: memory consolidation windows, relationship strength decay, conversation idle timeout, per-tick token budgets, scheduled control events, and so on.

The community-demo scenario (examples/community-demo/) runs 8 villagers + 1 governance agent for 30 ticks, pacing at 2 seconds per tick:

{
  "name": "Villaggio del Sole — Razionamento Idrico",
  "maxTicks": 30,
  "tickIntervalMs": 2000,
  "trigger": { "atTick": 10, "announcement": "Il sindaco annuncia il razionamento…" }
}

With iterationsPerTick: 2 on each villager, a single tick can contain up to two internal LLM reasoning steps per agent, enough to read incoming messages, check a tool (check_weather, observe_environment), decide how to react, and reply.

The scenario uses the tick counter as a narrative timeline:

- Ticks 1–9: baseline life in the village. Paolo (the journalist) checks the weather forecast, Maria (the farmer) observes her well drying up, gossip starts spreading through Giuseppe's bakery.
- Tick 10: the on("tick", …) handler fires the policy trigger:

      world.on("tick", (tick) => {
        if (tick === triggerTick && announcement) {
          console.log(`POLICY TRIGGER — Tick ${tick}`);
        }
      });

  The water-rationing rules become active and the governance agent starts enforcing them.
- Ticks 11–30: coalitions form, resistance emerges, Sara proposes rainwater harvesting, Padre Lorenzo mediates. Every action, every message, every mood change is stamped with the tick it happened on, which is exactly what the Studio timeline and final report replay.

The basic-world example (examples/basic-world/index.ts) shows ticks as injection points for host-driven events:

world.on("tick", (tick) => {
  if (tick === 5) world.pauseAgent("person-2", "Fase di test");
  if (tick === 8) world.resumeAgent("person-2");
  if (tick === 15) world.stopAgent("person-4", "Missione completata");
});

Because the tick loop is the single clock of the simulation, scheduling "at tick N do X" is trivial: no cron, no timers, no race conditions. You can inject policy changes, simulated crises (a price shock, a rumor, a blackout) or agent lifecycle events deterministically at specific ticks, and the same scenario will replay identically if you fix the LLM seed.
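When a scenario has many timed events, a chain of `if (tick === N)` checks can be replaced by a small tick-indexed schedule. The helper below is a hypothetical sketch, not part of WorldSim: `TickSchedule`, `at`, and `run` are names invented here, and the only assumption about the engine is that a tick handler receives the tick number.

```typescript
// Hypothetical helper: a tick-indexed schedule. Not part of WorldSim's API.
type TickAction = (tick: number) => void;

class TickSchedule {
  private readonly actions = new Map<number, TickAction[]>();

  // Register an action to run when the clock reaches `tick`.
  at(tick: number, action: TickAction): this {
    const list = this.actions.get(tick) ?? [];
    list.push(action);
    this.actions.set(tick, list);
    return this;
  }

  // Run every action registered for `tick`, in registration order.
  run(tick: number): void {
    for (const action of this.actions.get(tick) ?? []) action(tick);
  }
}
```

With the engine, this would presumably be wired up as `world.on("tick", (tick) => schedule.run(tick))`, so the schedule itself stays a plain data structure that can be tested without an LLM.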

- pause() sets status to paused and the while loop exits cleanly; the clock freezes at the current tick.
- resume() re-enters runLoop() from the same tick; no state is lost.
- stop() ends the loop, fires the onWorldStop plugin hook with the full event log, and lets you collect the final report.
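These lifecycle semantics can be modeled with a tiny state machine. The sketch below is synchronous and leaves out plugins and hooks; `TickClock` and its members are hypothetical names, chosen only to mirror the behavior described above (pause freezes the clock, resume continues from the same tick, stop ends the run).

```typescript
// Hypothetical state machine mirroring the described semantics; not WorldSim's code.
type WorldStatus = "idle" | "running" | "paused" | "stopped";

class TickClock {
  status: WorldStatus = "idle";
  tick = 0;
  private readonly maxTicks: number;
  private readonly onTick: (tick: number) => void;

  constructor(maxTicks: number, onTick: (tick: number) => void) {
    this.maxTicks = maxTicks;
    this.onTick = onTick;
  }

  start(): void { this.runLoop(); }
  pause(): void { if (this.isRunning()) this.status = "paused"; }
  resume(): void { if (this.status === "paused") this.runLoop(); }
  stop(): void { this.status = "stopped"; }

  private isRunning(): boolean { return this.status === "running"; }

  private runLoop(): void {
    this.status = "running";
    // The loop exits as soon as the status changes or maxTicks is reached.
    while (this.isRunning() && this.tick < this.maxTicks) {
      this.tick += 1;
      this.onTick(this.tick);
    }
    if (this.isRunning()) this.status = "stopped"; // maxTicks reached
  }
}
```

Because `tick` is never reset, resuming after a pause continues with the next tick rather than replaying earlier ones.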

| Use case | maxTicks | tickIntervalMs | Notes |
|---|---|---|---|
| Live demo in the Studio dashboard | 20–50 | 1000–2000 | Human-watchable pace |
| Automated evaluation / CI | 20–100 | 0 | Run at full speed |
| Benchmarks | 100+ | 0 | Measure throughput |
| Long-horizon emergent dynamics | 200+ | 0 or small | Combine with defaultActiveTickRatio < 1 to keep LLM costs bounded |

If you're unsure, start with the community demo's numbers (maxTicks: 30, tickIntervalMs: 2000) and tune from there.
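Before tuning, it can help to estimate how large a run is. The helpers below are a back-of-the-envelope sketch: the formulas are simple assumptions (every agent runs on every tick, and pacing is the only source of delay), not figures that WorldSim reports, and `maxReasoningSteps` and `pacingSeconds` are hypothetical names.

```typescript
// Back-of-the-envelope helpers; the formulas are assumptions, not WorldSim output.
interface RunShape {
  agents: number;
  maxTicks: number;
  iterationsPerTick: number;
}

// Upper bound on agent reasoning steps if every agent runs on every tick.
function maxReasoningSteps(run: RunShape): number {
  return run.agents * run.maxTicks * run.iterationsPerTick;
}

// Approximate wall-clock time spent on pacing alone, in seconds.
function pacingSeconds(maxTicks: number, tickIntervalMs: number): number {
  return (maxTicks * tickIntervalMs) / 1000;
}
```

For the community demo's 8 villagers (30 ticks, iterationsPerTick: 2) this gives at most 480 reasoning steps and about 60 seconds of pacing, before counting LLM latency or the governance agent.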

Agents that own the right assets can text each other, place phone calls (their dialog is transcribed as a chat), and move around the world under rules you control.

import {
  WorldEngine,
  InMemoryAssetStore,
  PhonePlugin,
  MovementPlugin,
  LocationIndex,
  createPhoneAsset,
  defaultMovementPolicy,
} from "worldsim";

const assetStore = new InMemoryAssetStore();
const locationIndex = new LocationIndex();

const engine = new WorldEngine({
  /* ...llm, stores... */
  assetStore,
  // Default policy allows walking within 1.5 km, requires a vehicle beyond.
  walkingRadiusMeters: 1500,
  // Or replace with your own rules (health data, public transit, licenses, …):
  // movementPolicy: (req) => ({ allowed: req.distanceMeters < 500, mode: "walking" }),
});

engine.use(new MovementPlugin(locationIndex));
engine.use(
  new PhonePlugin({
    assetStore,
    messageBus: engine.getMessageBus(),
    conversationManager: engine.getConversationManager(),
  }),
);

// Give Alice a phone and a car so she can text, call, and drive long distances.
await assetStore.addAssets([
  createPhoneAsset({ agentId: "alice", phoneNumber: "+39 111" }),
  { id: "car-alice", type: "vehicle", name: "Panda", owner: "alice", ownerType: "agent" },
]);

Once their phone is registered, agents automatically get four tools: send_sms, start_call, speak_in_call, hang_up. Call transcripts land on the bus as regular Messages with type: "call_transcript" and metadata.callId, so UIs and the reporting plugin can render them as chat turns.

Movement is governed by a MovementPolicy — a pure function that receives { agentId, from, to, distanceMeters, assets, profile } and returns { allowed, mode?, reason? }. Swap defaultMovementPolicy for anything you need: public transit, HealthKit steps, weather, curfews. WorldSim stays agnostic.
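A custom policy might look like the sketch below. The field names of the request and the decision come from the description above, but their types are assumptions declared locally so the example is self-contained. The `"driving"` mode value and the `vehicleOrWalkPolicy` factory are hypothetical.

```typescript
// Local stand-in types: field names follow the text above, their shapes are assumed.
interface Asset { id: string; type: string; owner: string }

interface MovementRequest {
  agentId: string;
  from: string;
  to: string;
  distanceMeters: number;
  assets: Asset[];
  profile: { age?: number };
}

interface MovementDecision { allowed: boolean; mode?: string; reason?: string }

type MovementPolicy = (req: MovementRequest) => MovementDecision;

// Walk short distances, drive long ones if the agent owns a vehicle, otherwise refuse.
const vehicleOrWalkPolicy = (walkingRadiusMeters: number): MovementPolicy => (req) => {
  if (req.distanceMeters <= walkingRadiusMeters) {
    return { allowed: true, mode: "walking" };
  }
  const hasVehicle = req.assets.some(
    (asset) => asset.type === "vehicle" && asset.owner === req.agentId,
  );
  return hasVehicle
    ? { allowed: true, mode: "driving" } // "driving" is an assumed mode name
    : { allowed: false, reason: "Too far to walk and no vehicle available" };
};
```

Keeping the policy a pure function of the request means it can be unit-tested without starting an engine, and swapped per scenario.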

A WorldSim scenario is just a folder with four ingredients. The simplest way to start is to copy evaluation/scenarios/water-rationing/ and adapt it.

my-scenario/

├── scenario.json # agents + trigger + timing

├── rules/

│ ├── base-rules.json # rules active from tick 1

│ └── trigger-rules.json # rules loaded when the shock fires

├── expected.md # (optional) qualitative rubric for evaluation

└── index.ts # runner that wires engine + plugins

scenario.json is a declarative file with timing, the policy trigger, and the cast:

| Field | Purpose |
|---|---|
| name, description | Human-readable identity of the run |
| maxTicks | How long the simulation runs (e.g. 30) |
| tickIntervalMs | Wall-clock pause between ticks (2000 for live demo, 0 for tests) |
| trigger.atTick | When the disruptive event fires |
| trigger.addRules | Relative paths of rule files to load at the trigger |
| trigger.announcement | Broadcast text delivered to every agent |
| agents[] | The list of actors |

Each agent declares its identity and — crucially — its personality:

The systemPrompt is where simulation quality lives: the more specific it is about tone, values and internal conflicts, the longer the agent stays in character across the run.
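A scenario file can be given a type and a few sanity checks before it is handed to the engine. The interfaces below are an assumed shape derived from the fields described in this section, not an official schema, and `checkScenario` is a hypothetical helper.

```typescript
// Assumed shape of scenario.json, derived from the documented fields; not an official schema.
interface ScenarioTrigger {
  atTick: number;
  addRules?: string[];
  announcement: string;
}

interface ScenarioAgent {
  id: string;
  role: "person" | "control";
  name: string;
  systemPrompt: string;
  iterationsPerTick?: number;
}

interface Scenario {
  name: string;
  description?: string;
  maxTicks: number;
  tickIntervalMs: number;
  trigger: ScenarioTrigger;
  agents: ScenarioAgent[];
}

// Returns human-readable problems; an empty list means the checks passed.
function checkScenario(s: Scenario): string[] {
  const problems: string[] = [];
  if (s.trigger.atTick < 1 || s.trigger.atTick > s.maxTicks) {
    problems.push("trigger.atTick must fall within 1..maxTicks");
  }
  const ids = new Set<string>();
  for (const agent of s.agents) {
    if (ids.has(agent.id)) problems.push(`duplicate agent id: ${agent.id}`);
    ids.add(agent.id);
  }
  if (!s.agents.some((agent) => agent.role === "control")) {
    problems.push('no governance agent (role: "control") to enforce hard rules');
  }
  return problems;
}
```

Checks like these catch a trigger that can never fire or a cast without a governance agent before any LLM tokens are spent.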

Rules are interpreted by the RuleEngine and enforced by the governance ControlAgent:

{
  "version": "1.0",
  "name": "Village rules",
  "rules": [
    {
      "id": "rispetto",
      "priority": 1,
      "scope": "all",
      "instruction": "All members must communicate respectfully. Insults are forbidden.",
      "enforcement": "hard"
    }
  ]
}

- scope: "all" (everyone), "person" (only human agents), "control" (only governance agents).
- enforcement: "hard" blocks the action, "soft" only warns.
- priority: lower number = evaluated first.
- instruction: free text passed to the ControlAgent as judgement context.

The convention across the existing scenarios is two files: a base rulebook loaded from tick 1 (e.g. community-rules.json) and a trigger rulebook loaded at trigger.atTick via trigger.addRules (e.g. water-rationing.json).
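How scope and priority interact can be sketched with a small filter-and-sort helper. The `Rule` type mirrors the JSON fields shown above. `applicableRules` is a hypothetical function for illustration and does not claim to reproduce how the RuleEngine is implemented.

```typescript
// Assumed shapes based on the rule fields described above; applicableRules is hypothetical.
type Scope = "all" | "person" | "control";
type Enforcement = "hard" | "soft";

interface Rule {
  id: string;
  priority: number;
  scope: Scope;
  instruction: string;
  enforcement: Enforcement;
}

// Rules that apply to a role, ordered so lower priority numbers come first.
function applicableRules(rules: Rule[], role: "person" | "control"): Rule[] {
  return rules
    .filter((rule) => rule.scope === "all" || rule.scope === role)
    .sort((a, b) => a.priority - b.priority);
}
```

Since `filter` returns a new array, the input rulebook is left untouched, so base and trigger rulebooks can be concatenated and queried repeatedly.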

expected.md is not required to run the simulation, but it is essential when you want to judge its output. It lists, per agent, what the run should look like, the expected dynamics over time, and the failure modes that signal a broken scenario. Together with evaluation/criteria.md it forms the rubric used to score simulation reports.

The runner wires the scenario into a WorldEngine, registers plugins and fires the trigger. A minimal template:

import {
  WorldEngine,
  ConsoleLoggerPlugin,
  InMemoryMemoryStore,
  InMemoryGraphStore,
  studioPlugin,
} from "worldsim";
import { reportGeneratorPlugin } from "worldsim/plugins";
import { readFileSync } from "node:fs";

const scenario = JSON.parse(readFileSync("scenario.json", "utf-8"));

const world = new WorldEngine({
  worldId: scenario.name,
  maxTicks: scenario.maxTicks,
  tickIntervalMs: scenario.tickIntervalMs,
  llm: {
    baseURL: "https://api.openai.com/v1",
    apiKey: process.env.OPENAI_API_KEY!,
    model: "gpt-4o-mini",
  },
  rulesPath: { json: ["rules/community-rules.json"] },
  memoryStore: new InMemoryMemoryStore(),
  graphStore: new InMemoryGraphStore(),
});

world.use(ConsoleLoggerPlugin);

const report = reportGeneratorPlugin({ engine: world });
world.use(report.plugin);
world.use(studioPlugin({ engine: world, port: 4400, open: true }));

for (const agent of scenario.agents) world.addAgent(agent);

world.on("tick", (tick) => {
  if (tick === scenario.trigger.atTick) {
    report.recordPolicyTrigger(tick, scenario.trigger.announcement);
  }
});

await world.start();

Calling report.recordPolicyTrigger(tick, announcement) at the trigger tick is what lets the report build the shock section (pre/post stats, deltas, recoveryTicks).
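To make the idea of pre/post statistics concrete, here is one plausible way such numbers could be computed from a per-tick metric such as average mood. This is purely illustrative: how WorldSim actually defines its windows, deltas, and recoveryTicks is not specified here, and `shockStats`, the window size, and the "back to the pre-shock mean" recovery criterion are all assumptions.

```typescript
// Illustrative only: WorldSim's actual definitions of these statistics may differ.
interface ShockStats {
  preMean: number;
  postMean: number;
  delta: number;
  recoveryTicks: number | null; // null = never recovered within the series
}

const mean = (xs: number[]): number =>
  xs.reduce((sum, x) => sum + x, 0) / xs.length;

// series[i] holds the metric for tick i + 1; triggerTick is 1-based and must be > 1.
function shockStats(series: number[], triggerTick: number, window: number): ShockStats {
  const triggerIndex = triggerTick - 1;
  const pre = series.slice(Math.max(0, triggerIndex - window), triggerIndex);
  const post = series.slice(triggerIndex, triggerIndex + window);
  const preMean = mean(pre);
  const postMean = mean(post);
  // Assumed criterion: first tick after the trigger where the metric is back at the pre-shock mean.
  const recoveredAt = series.findIndex((value, i) => i > triggerIndex && value >= preMean);
  return {
    preMean,
    postMean,
    delta: postMean - preMean,
    recoveryTicks: recoveredAt === -1 ? null : recoveredAt - triggerIndex,
  };
}
```

The recovery criterion here only makes sense for a metric that drops after the shock; a metric that rises would need the comparison reversed.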

Add these only when the scenario needs them:

| If you want... | Add |

|---|---|

| Phones / SMS / calls between agents | PhonePlugin + InMemoryAssetStore + createPhoneAsset |

| Physical movement across the world | MovementPlugin + LocationIndex + MovementPolicy |

| Vital skills (farming, cooking…) | LifeSkillsPlugin([...]) |

| Real-world tools (weather, environment) | RealWorldToolsPlugin({ dataSources }) |

| Live dashboard in the browser | studioPlugin({ engine, port: 4400 }) |

| Final report + sociological analysis | reportGeneratorPlugin({ engine }) |

| Shock analysis in the report | report.recordPolicyTrigger(tick, msg) |

| Reproducible evaluation | Drop the scenario under evaluation/scenarios/<name>/ and run run-evaluation.ts |

reportGeneratorPlugin produces a SimulationReport — fully JSON-serializable, consumable from the Studio dashboard and exportable to CSV — with:

- summary, timeline, per-agent trajectories (mood, energy, status changes).
- relationships[] with initial/final strength and per-tick snapshots.
- metrics (speaks, observations, tool calls, tokens, cost).
- network: degree / betweenness / eigenvector centrality, density over time, communities, reciprocity, homophily.
- dialogue: who-talks-to-whom matrix, voice Gini, response rate, message-length stats.
- shock (when recordPolicyTrigger is called): pre/post windows, deltas, recoveryTicks.
- archetypes: each agent classified as compliant | skeptic | resistant | apathetic with rationale, plus emotional contagion and mood variance per tick.
- narrative (opt-in, LLM cost): global story arc, per-agent arcs, emblematic quotes. Triggered via POST /api/reports/:runId/narrative.
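As an example of how one of these metrics can be read, the "voice Gini" is presumably a Gini coefficient over how much each agent speaks. The sketch below computes the standard Gini coefficient of per-agent message counts. Treating that as the definition of voice Gini is an assumption, and `gini` is a standalone helper, not a WorldSim export.

```typescript
// Standard Gini coefficient of non-negative counts.
// 0 = every agent speaks equally; (n - 1) / n = a single agent does all the talking.
function gini(counts: number[]): number {
  const n = counts.length;
  const total = counts.reduce((sum, c) => sum + c, 0);
  if (n === 0 || total === 0) return 0;
  const sorted = counts.slice().sort((a, b) => a - b);
  // G = 2 * sum_i(i * x_i) / (n * sum_i(x_i)) - (n + 1) / n, with i = 1..n over ascending x
  const weighted = sorted.reduce((sum, c, i) => sum + (i + 1) * c, 0);
  return (2 * weighted) / (n * total) - (n + 1) / n;
}
```

A value that climbs over the run would suggest that a few agents are dominating the conversation, which is worth checking against the "Monologues" failure mode.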

- Copy a template: duplicate evaluation/scenarios/water-rationing/ as evaluation/scenarios/my-case/.
- Rewrite scenario.json with at least 3 personalities in tension (otherwise "immediate consensus" kills the narrative).
- Define the rules: a few soft rules as baseline + one hard rule as the trigger shock.
- Write expected.md: even just for yourself, it makes it obvious when a run is broken.
- Run it live with tickIntervalMs: 2000 and the Studio dashboard to watch dynamics unfold.
- Run it headless (tickIntervalMs: 0) and compare evaluation/results/<name>.json against expected.md using criteria.md.
- Iterate on the system prompts: ~90% of simulation quality comes from prompt specificity and the clarity of the trigger announcement.

- Homogeneous cast → vary age, profession, personality, goals. The network.homophily score in the report will flag this.
- Ignored trigger → the announcement must be explicit and at least one rule needs enforcement: "hard" with a governance agent (role: "control") to enforce it.
- Monologues → give agents backstories that connect them, and prompt them to address others by name.
- Language drift → if the scenario is in Italian, insist in the prompt: "parli sempre in italiano" ("you always speak Italian").
- No narrative arc → 30 ticks with a mid-run trigger is the minimum to get pre/reaction/coalition/resolution; below 15 ticks everything collapses.

| Feature | Description |

|---|---|

| LLM-agnostic | OpenAI, Anthropic proxies, Ollama — anything OpenAI-compatible |

| Personality system | Mood, energy, goals, beliefs, knowledge per agent |

| Social dynamics | Relationship tracking with strength decay, neighborhoods |

| Rule enforcement | Hard/soft rules, governance agent with autonomous control |

| Scalability | 1000+ agents via concurrency caps, activity scheduling, token budgets |

| Zero-config persistence | In-memory by default; plug in Redis, Neo4j, PostgreSQL for production |

| Real-time streaming | Socket.IO events for live dashboards |

| Simulation reports | Auto-generated analysis with mood heatmaps and action metrics |

- Architecture & internals

- Persistence & databases

- Scaling to production

- Plugin authoring guide

- Evaluation scenarios

- Development roadmap

See CONTRIBUTING.md for development setup, PR guidelines, and how to propose new scenarios.

MIT

Example agent entry (one element of agents[] in scenario.json):

{
  "id": "maria",
  "role": "person",           // "person" | "control" (governance)
  "name": "Maria Rossi",
  "iterationsPerTick": 2,     // internal LLM reasoning steps per tick
  "systemPrompt": "Sei Maria, contadina di 52 anni, pratica e testarda…",
  "profile": {
    "age": 52,
    "profession": "Contadina",
    "personality": ["pratica", "testarda", "generosa"],
    "goals": ["Salvare il raccolto", "Proteggere la famiglia"],
    "backstory": "…",
    "skills": ["farming", "cooking"]
  }
}