(delete this for feature requests)

Client

PubSub, latest version in Go.

From Gopkg.lock:

[[projects]]

digest = "1:cab4bd3c2a4dfa2a4a04c72db04fb47dfcffdd55e131fcf0a81ff5379b07d181"

name = "cloud.google.com/go"

packages = [

".",

"compute/metadata",

"iam",

"internal",

"internal/optional",

"internal/trace",

"internal/version",

"pubsub",

"pubsub/apiv1",

"pubsub/internal/distribution",

"storage",

]

pruneopts = "UT"

revision = "264def2dd949cdb8a803bb9f50fa29a67b798a6a"

version = "v0.46.3"

Describe Your Environment

Docker container based on golang:1.12.6-alpine3.10 running in GKE.

Setup

I have published ~500k messages to PubSub, each message is JSON of size ~ 400-500 bytes. Subscription deadline is 10min.

Now, I launch go pubsub client with the configuration as follows:

ctx := context.Background()

pubsubClient, err := pubsub.NewClient(ctx, settings.ProjectId)

subscriber := pubsubClient.Subscription("not-relevant")

maxWorkers := 4

subscriber.ReceiveSettings.NumGoroutines = maxWorkers

subscriber.ReceiveSettings.MaxOutstandingMessages = maxWorkers

subscriber.ReceiveSettings.MaxOutstandingBytes = maxWorkers * 512

err = subscriber.Receive(ctx, func(ctx context.Context, m *pubsub.Message) {

defer m.Ack()

// do something, on average it takes 500ms, never more than 5 seconds

})Expected Behavior

At most 4 parallel go routines are processing the messages, pulling at most 4 messages at a time from PubSub. OR keeping only 4 * 512B stand-by messages in memory All messages are processed properly with ACKs in-time.

Actual Behavior

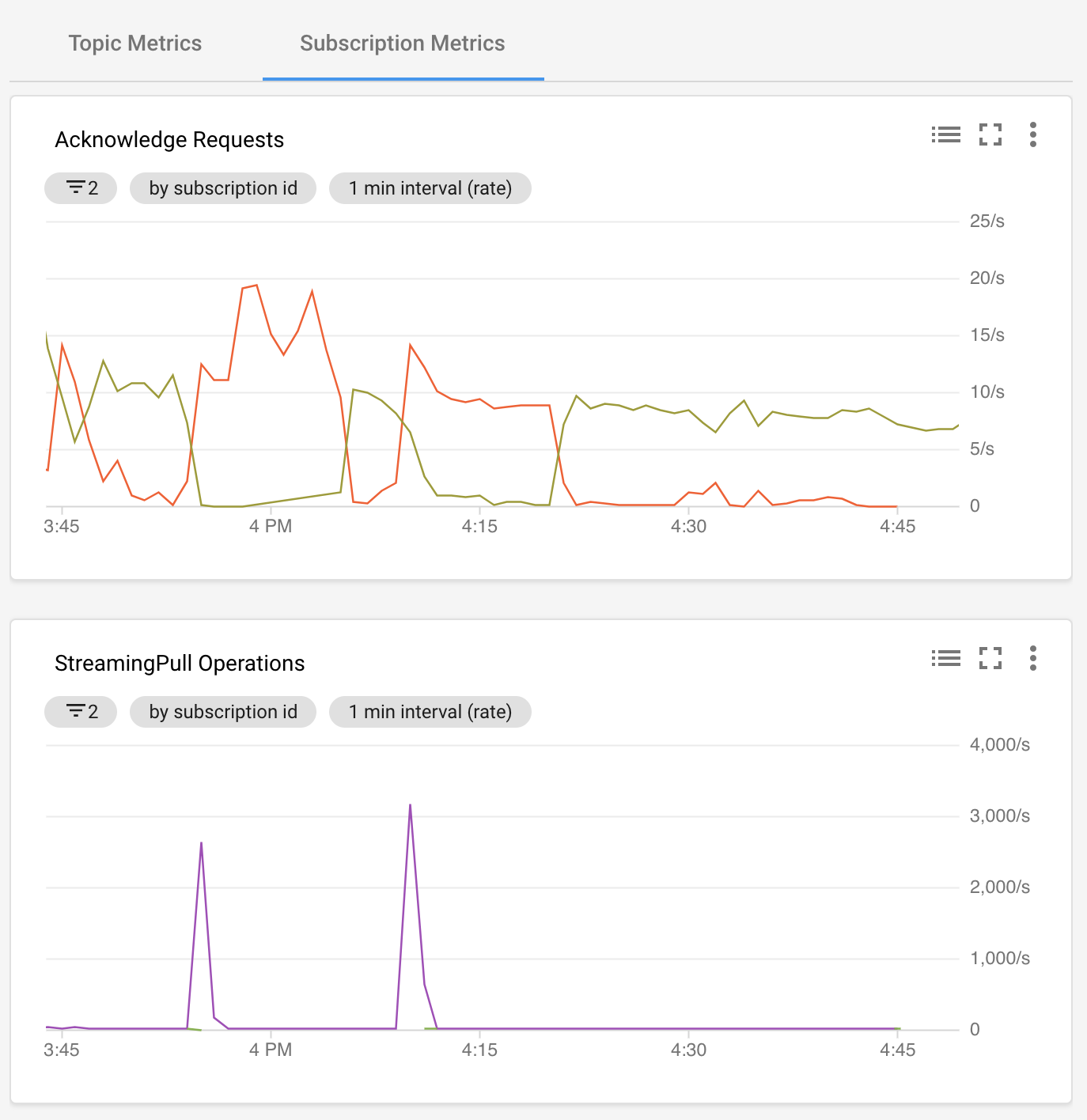

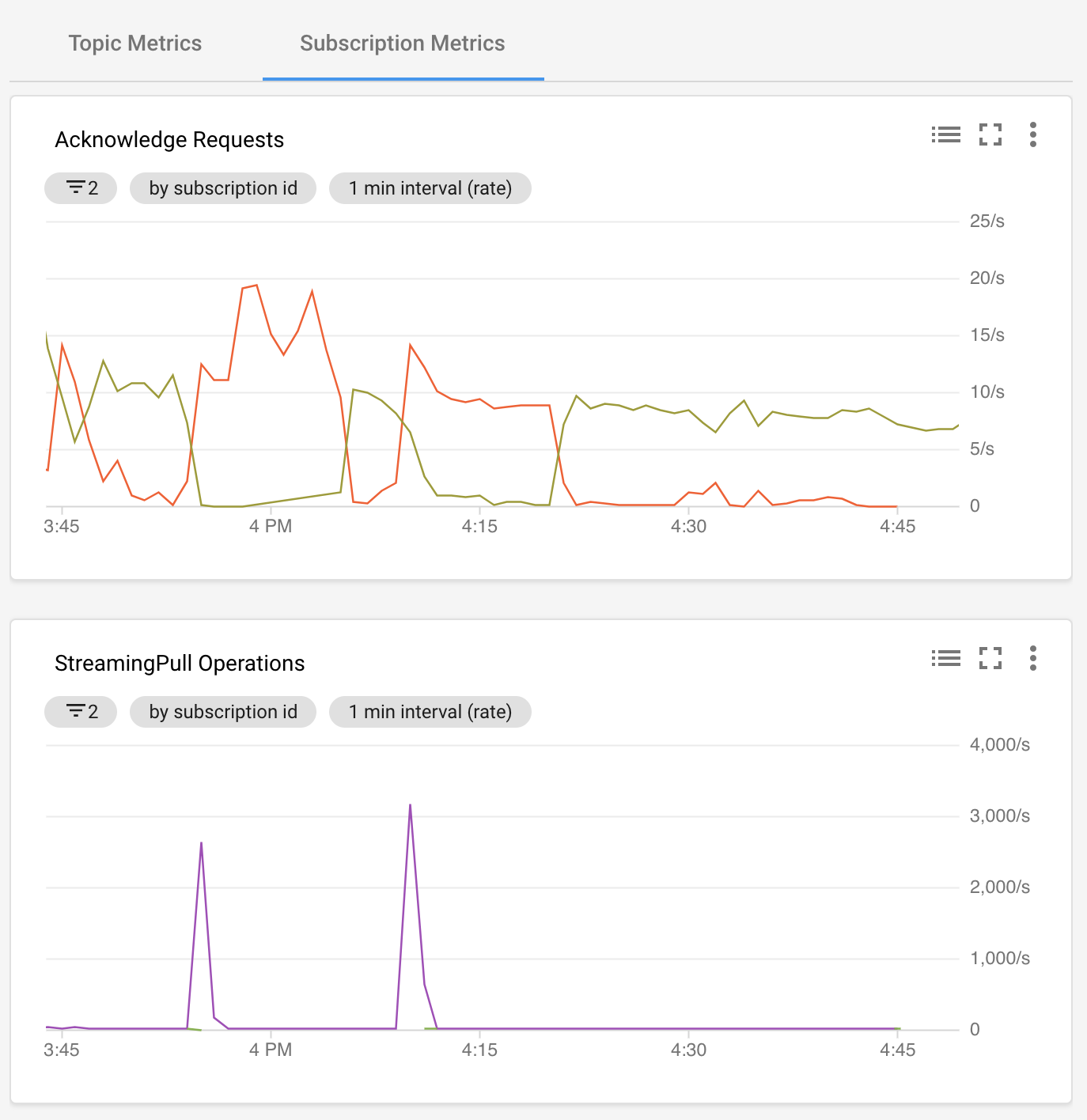

I suspected that my callback will be called in at most 4 parallel go routines and it actually is. The problem is that messages are processed in inconsistent rate and after 10min (coincidence? I don't think so! ), metrics show that most of messages that are processed result with expired ACK and the processing is stuck in a loop with old messages, yielding my processing a waste of time and resources.

Moreover, you can see spikes in the StreamingPull Operations. Those spikes happend when my container was deployed to the cluster (with the exact configuration showed above). How do the MaxOutstandingBytes and MaxOutstandingBytes parameters influence processing? The documentation for those parameters leaves much to be desired.

GREEN is expired ACK, ORANGE is valid ACK

This is such a trivial case, why the go library makes it so difficult to implement?

(delete this for feature requests)

Client

PubSub, latest version in Go.

From Gopkg.lock:

Describe Your Environment

Docker container based on

golang:1.12.6-alpine3.10running in GKE.Setup

I have published ~500k messages to PubSub, each message is JSON of size ~ 400-500 bytes. Subscription deadline is 10min.

Now, I launch go pubsub client with the configuration as follows:

Expected Behavior

At most 4 parallel go routines are processing the messages, pulling at most 4 messages at a time from PubSub. OR keeping only 4 * 512B stand-by messages in memory All messages are processed properly with ACKs in-time.

Actual Behavior

I suspected that my callback will be called in at most 4 parallel go routines and it actually is. The problem is that messages are processed in inconsistent rate and after 10min (coincidence? I don't think so! ), metrics show that most of messages that are processed result with expired ACK and the processing is stuck in a loop with old messages, yielding my processing a waste of time and resources.

Moreover, you can see spikes in the StreamingPull Operations. Those spikes happend when my container was deployed to the cluster (with the exact configuration showed above). How do the

MaxOutstandingBytesandMaxOutstandingBytesparameters influence processing? The documentation for those parameters leaves much to be desired.GREEN is expired ACK, ORANGE is valid ACK

This is such a trivial case, why the go library makes it so difficult to implement?